I Built a Private AI on a Raspberry Pi 5. Here Are My 3 Biggest Takeaways.

When you think of a voice assistant, you probably picture Alexa or Siri: software living in a smart speaker or phone that’s perpetually connected to the internet. These assistants rely on massive, distant data centers to process your every command. But what if you could have a powerful, conversational AI that worked entirely offline, with no Wi-Fi and no connection to a remote server?

That’s the premise of a project to build a fully self-contained AI voice assistant named Leela on a simple Raspberry Pi 5. This device can listen, think, and talk all on its own, running everything locally. The process of building it revealed some surprising and impactful truths about the current state of accessible AI.

This post explores the most significant discoveries from that experiment. From the profound implications of AI privacy to the surprising hardware appetite of even “small” models, here are the key takeaways from building a truly independent AI.

1. True AI Independence is Private and Portable

The most profound realization from this project is what “fully offline” truly means. Every single part of the AI’s process—from listening and transcribing your voice to generating a thoughtful response and speaking it back to you—happens directly on the Raspberry Pi. No data is ever sent to a third-party server.

This local-first approach stands in stark contrast to cloud-based services. The benefits are immediate and clear: absolute privacy and complete portability. The project’s creator aptly describes the result as “private, portable, and playful.” This combination of privacy and portability unlocks new use cases impossible for cloud-tethered assistants—from a secure note-taker in a confidential meeting to an interactive field guide on a remote hiking trail. It’s a powerful reminder that as AI becomes more capable, we don’t have to sacrifice our privacy for convenience.

Offline is the new smart.

2. Pocket-Sized AI Has a Surprisingly Big Appetite

While the project successfully runs on a consumer-friendly device like the Raspberry Pi 5, it pushes the hardware to its absolute limits. Building a local AI is not a lightweight task, and the resource demands are a testament to the intense computations required.

For this specific build, the 8GB RAM model of the Raspberry Pi 5 is recommended for a crucial reason: the AI software stack alone consumes around 4GB of memory just to idle. Furthermore, the constant processing generates a significant amount of heat. An active cooler isn’t just a suggestion; it’s a necessity for stable performance.

…this setup gets hot enough to cook breakfast…

The line is an exaggeration, but it points at something real: running a local language model keeps the Pi’s CPU under sustained heavy load, and that load turns into heat. Even when scaled down to run on a small board, modern AI models demand a surprising amount of compute and memory.
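If you want to watch those two pressures on your own board, a minimal Python sketch like the one below can help. It is not part of the original project. It assumes a Linux system that exposes `/proc/meminfo` and a thermal zone at `/sys/class/thermal/thermal_zone0/temp` (the usual location on Raspberry Pi OS, but treat the path as an assumption), and the helper names are made up for illustration.

```python
from pathlib import Path
from typing import Dict, Optional


def parse_meminfo(text: str) -> Dict[str, int]:
    """Parse /proc/meminfo-style text into {field name: value in kB}."""
    fields = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            fields[name.strip()] = int(parts[0])
    return fields


def available_gib(meminfo_text: str) -> float:
    """Memory the kernel estimates is available for new work, in GiB."""
    return parse_meminfo(meminfo_text)["MemAvailable"] / (1024 ** 2)


def cpu_temp_celsius(raw: str) -> float:
    """Thermal zone files report millidegrees Celsius as an integer."""
    return int(raw.strip()) / 1000.0


def read_or_none(path: str) -> Optional[str]:
    """Return the file's text, or None if it is missing or unreadable."""
    try:
        return Path(path).read_text()
    except OSError:
        return None


if __name__ == "__main__":
    meminfo = read_or_none("/proc/meminfo")
    temp = read_or_none("/sys/class/thermal/thermal_zone0/temp")
    if meminfo is not None:
        print(f"available memory: {available_gib(meminfo):.1f} GiB")
    if temp is not None:
        print(f"CPU temperature: {cpu_temp_celsius(temp):.1f} C")
```

Running it once before starting the assistant and once while it idles gives a rough picture of how much of the 8GB the stack is holding and how warm the CPU is getting.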

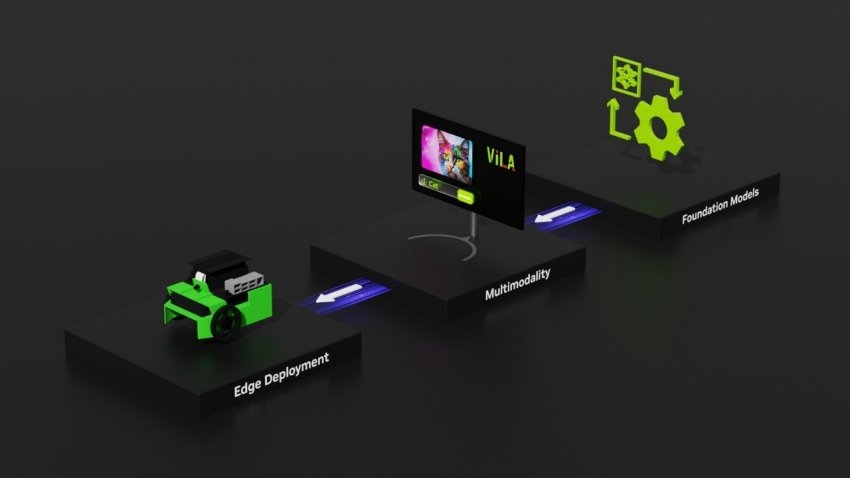

3. The AI is a “Dream Team” of Specialized Open-Source Tools

The “AI” in this voice assistant isn’t one single, monolithic program. Instead, it’s a carefully orchestrated “dream team” of specialized, open-source software, with each component chosen strategically for its specific task. This modular approach is what makes a complex project like this achievable for hobbyists and developers.

The core software stack consists of three main players:

• Whisper: The industry standard for offline Automatic Speech Recognition (ASR). It handles the “listening” part, accurately transcribing spoken words into text. The tiny model is used here, a deliberate choice to balance high performance with the Pi’s limited resources.

• Ollama (with the Qwen 3 1.7B model): This is the “brain” of the operation. Ollama provides a powerful framework to run local large language models (LLMs) and easily swap them out. The Qwen 3 1.7B model was a strategic choice, selected specifically because other models tended to “talk too much,” providing a practical lesson in choosing a tool that gives the desired concise output.

• Piper: A fast and efficient neural text-to-speech engine. This was chosen because it is highly optimized for ARM-based hardware like the Raspberry Pi, enabling it to convert the LLM’s text response into natural-sounding speech with minimal delay.
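To make the "easily swap them out" point concrete, here is a rough Python sketch of talking to a local Ollama server over its HTTP API. It is an illustration, not the project's actual code. It assumes Ollama is running on its default port (11434) and that the model was pulled under the tag `qwen3:1.7b`. The function names `build_request` and `ask` are invented for this example.

```python
import json
import urllib.request

# Default address of a locally running Ollama server (assumed, not from the project).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "qwen3:1.7b") -> urllib.request.Request:
    """Build a non-streaming generate request; the model is just a parameter."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "qwen3:1.7b", timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Because the model name is only a string in the request body, trying a different model is a one-argument change, for example `ask("Say hi in five words.", model="some-other-model")` with whatever tag you have pulled. Nothing in this exchange leaves the device.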

This project highlights the critical balancing act of edge AI development: selecting models and tools powerful enough to be useful, but small and efficient enough to run on constrained hardware.
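The listen, think, talk flow described above can be sketched as a small Python class that chains three interchangeable stages. This is an assumed structure for illustration, not how the project is actually wired. `VoicePipeline` and its field names are hypothetical, and the stages are plain callables so that real Whisper, Ollama, and Piper wrappers (or smaller stand-ins) can be plugged in without changing the loop.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoicePipeline:
    """Chains speech-to-text, a language model, and text-to-speech."""
    transcribe: Callable[[str], str]   # audio file path -> transcript (e.g. Whisper tiny)
    generate: Callable[[str], str]     # transcript -> reply (e.g. Qwen 3 1.7B via Ollama)
    speak: Callable[[str], None]       # reply -> audio output (e.g. Piper)

    def run(self, audio_path: str) -> str:
        heard = self.transcribe(audio_path).strip()
        if not heard:
            # Nothing intelligible was captured, so skip the costly stages.
            return ""
        reply = self.generate(heard).strip()
        self.speak(reply)
        return reply


if __name__ == "__main__":
    # Stand-in stages so the sketch runs without any models installed.
    demo = VoicePipeline(
        transcribe=lambda path: "what is a raspberry pi",
        generate=lambda text: f"You asked: {text}",
        speak=lambda reply: print(f"[speaking] {reply}"),
    )
    demo.run("question.wav")
```

Keeping each stage behind a simple function boundary is one way to get the modularity the post describes: a heavier speech model or a terser language model can replace one stage while the rest stays untouched.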

Conclusion: Your Own Private AI is Closer Than You Think

This project proves that powerful, private, and offline AI is no longer the stuff of science fiction. It’s a tangible, DIY reality that can be built with accessible hardware and open-source software. It demonstrates a future where intelligent devices don’t need to be tethered to the cloud, offering users more control, privacy, and flexibility.

As the underlying technology continues to improve and become more efficient, the potential for these kinds of self-sufficient devices will only grow. As the project’s creator states, “I believe the future holds even more possibilities for small self-contained devices like this.”

As local AI becomes more powerful and accessible, what will you build with it?